Programmers have taught IBM’s AI platform Watson to interpret facial expressions, crowd reaction and body language, allowing Wimbledon to get highlights from Centre Court online faster than ever before.

For two weeks every summer, the centre of the tennis world is in the London suburb of Wimbledon. Millions of tennis fans follow the action. They want scores, they want player and tournament information. And they want the highlights.

With an average of three matches per day on each of the six main show courts, hundreds of hours of video can quickly mount up. It could take hours to pull together highlight packages.

But with IBM’s AI platform Watson, the All England Lawn Tennis Club (AELTC) is able to assemble full highlight reels of live events within five minutes of the end of a match, some two to 10 hours more quickly than before.

It does so using cognitive highlight clipping, in which the AI watches and analyses the main broadcast feed and applies a variety of APIs to identify key moments.

The functionality first appeared, in beta, during the 2017 Masters golf tournament, was trialled at Wimbledon last year and was refined again at the 2017 US Open tennis Grand Slam. It will be an ongoing process: IBM’s pact with major sports rights holders is as much about its own development of the product as it is about benefiting the event’s production.

The system will have clipped over 75,000 points from close to 1,000 hours of coverage of men’s and women’s singles matches during the course of this year’s Championships.

“This year we have slashed turnaround time for highlights generation from 45 minutes to 5 minutes,” explains IBM Client Executive Sam Seddon. “The system is more accurate and we’ve improved the dashboard (user interface) in terms of search and download of clips and we expect these to continually develop.”


Automated production

There are three levels of production deployed at Wimbledon this year: the conventional, all-manual workflow; a highlights workflow in which machine learning assists editors in their craft decisions; and a fully automated AI highlights assembly line with almost no human intervention.

These automated clips, each lasting around 2.5 minutes, appear on Wimbledon’s digital channel Wimbledon.com and are cut into shorter chunks for social media.

“The AI clips are served into a content management system for the editorial team to decide whether they want to publish,” says Seddon. “The AI creates much more than is actually published.”

Seddon explains that the system uses deep learning models and ‘self-supervised’ active learning techniques to recognise which points are significant, and therefore to understand what makes a good highlight.

“It understands the importance of certain scores, like a point that clinches a set, and uses visual and audio cues to create ratings for each point,” he says. “The output ranks exciting moments and auto-curates a highlights package.”

For visual cues, technicians trained Watson, using its Visual Recognition service, to identify when a player is performing actions that typically mark an exciting moment in the match: celebrating, waving to the crowd, pumping a fist and so on. The system also uses facial recognition to read the players’ emotional reactions.

Visual Recognition also helps the AI divide each match into individual points. It does this by recognising the camera placement and zoom that frame the scene at the start of each point, and it treats that shot as the place to begin the clip when assembling reels.
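To make the segmentation step concrete, here is a minimal Python sketch. It assumes a hypothetical shot classifier has already labelled every frame of the feed (for example as a wide court view, a close-up or a crowd shot); the label `wide_court` and the function `segment_points` are illustrative inventions, not part of IBM’s Watson API. A point is taken to start whenever the feed cuts into the wide shot and to end where the next point starts.

```python
from typing import List, Tuple

# Hypothetical label for the standard wide shot that frames the court at the
# start of each point; the real Watson model and its labels are not public.
POINT_START_SHOT = "wide_court"


def segment_points(frame_labels: List[str], fps: float) -> List[Tuple[float, float]]:
    """Split a match feed into (start_sec, end_sec) point segments.

    A new point begins whenever the feed cuts *into* the wide court shot;
    it ends where the next point begins, or at the end of the feed.
    """
    starts = [
        i
        for i, label in enumerate(frame_labels)
        if label == POINT_START_SHOT
        and (i == 0 or frame_labels[i - 1] != POINT_START_SHOT)
    ]
    segments = []
    for n, start in enumerate(starts):
        end = starts[n + 1] if n + 1 < len(starts) else len(frame_labels)
        segments.append((start / fps, end / fps))
    return segments
```

With one label per second, `segment_points(["close_up", "wide_court", "wide_court", "close_up", "crowd", "wide_court", "close_up"], fps=1.0)` returns `[(1.0, 5.0), (5.0, 7.0)]`: two points, each clipped from the moment the wide shot appears.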

In addition, the AI ingests statistical information from courtside devices that measure serve speed and ball position. With this data, Watson can flag moments such as particularly powerful serves, which the audience might not have noticed, as candidates for highlights.
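One simple way to pick out unusually fast serves from courtside speed data is a statistical threshold. The Python sketch below flags any serve faster than the mean plus `k` standard deviations of the serves in the list; the function name and the default `k = 1.5` are assumptions for illustration, since IBM has not said how Watson decides what counts as “particularly powerful”.

```python
from statistics import mean, pstdev
from typing import List


def flag_fast_serves(speeds_kmh: List[float], k: float = 1.5) -> List[int]:
    """Return the indices of serves faster than mean + k * standard deviation.

    k is a hypothetical tuning parameter: higher values flag fewer serves.
    """
    if len(speeds_kmh) < 2:
        return []  # not enough serves to establish a baseline
    threshold = mean(speeds_kmh) + k * pstdev(speeds_kmh)
    return [i for i, speed in enumerate(speeds_kmh) if speed > threshold]
```

For example, `flag_fast_serves([180, 185, 190, 182, 215])` returns `[4]`, the 215 km/h serve. Running the check separately for each player would stop a big server’s routine deliveries from being flagged simply for being fast.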

The system also analyses the statistics that correlate with important moments in a match. Not all winners are equal in impact, and the model helps keep the focus on genuine turning points and defining moments.


Listening to match play

That’s not all. IBM’s team worked with MIT to develop a deep neural network called SoundNet for environmental sound analysis of crowd noise.

IBM explains that in sports like golf and tennis, the comparative hush of match play is punctuated by sounds from the fans, players and commentators. An uproar of noise is a strong indicator of a “very interesting” highlight, and additional cheering from the player and commentator adds to the magnitude of excitement. Each sound file is given a numerical ranking by a model trained on millions of archive video clips.

“Watson can hear the roar of the crowd and interpret what’s happening from the fans’ reaction,” explains Seddon.

The technology is apparently smart enough to distinguish a polite handclap or an ‘ahh’ from a genuinely dramatic roar, and to factor that into its scoring.

“We could train the AI on everything the commentator says (which is what Watson’s application does for golf), but here we have 15,000 people on Centre Court telling us whether it’s a good shot.”

Data from the visual, audio and statistical cues is then combined, and each clip is scored for ‘Overall Excitement’, covering the most dramatic and climactic moments and all the points in between.
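How Watson weights its cues is not public. As a minimal sketch, assuming each cue has already been normalised to a score between 0 and 1, the following Python combines them with hypothetical weights into an overall excitement score, ranks the points, and greedily fills a package of roughly 2.5 minutes (the clip length mentioned earlier), returning the chosen points in match order. `PointClip`, `WEIGHTS` and `build_package` are illustrative names, not IBM’s.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical weights; IBM has not published how Watson combines its cues.
WEIGHTS: Dict[str, float] = {"crowd": 0.4, "gesture": 0.3, "stats": 0.3}


@dataclass
class PointClip:
    point_id: int
    start_sec: float
    duration_sec: float
    cues: Dict[str, float]  # each cue score assumed normalised to [0, 1]


def overall_excitement(clip: PointClip) -> float:
    """Weighted sum of the per-cue scores (a missing cue counts as 0)."""
    return sum(w * clip.cues.get(name, 0.0) for name, w in WEIGHTS.items())


def build_package(clips: List[PointClip], budget_sec: float = 150.0) -> List[PointClip]:
    """Greedily take the most exciting points that fit the time budget,
    then return them in match order for the highlights reel."""
    ranked = sorted(clips, key=overall_excitement, reverse=True)
    chosen: List[PointClip] = []
    used = 0.0
    for clip in ranked:
        if used + clip.duration_sec <= budget_sec:
            chosen.append(clip)
            used += clip.duration_sec
    return sorted(chosen, key=lambda c: c.start_sec)
```

The greedy selection is a deliberately simple choice: it can leave part of the budget unused when a lower-ranked point would have fitted better, which an exact knapsack-style selection would avoid at the cost of more computation.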

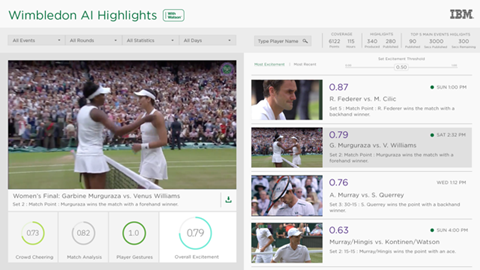

The All England Club’s editorial team has access to the AI-derived highlights through a web-based dashboard that runs on the IBM Cloud.

The interface shows highlights sorted by overall excitement in a multimedia mosaic explorer. When producers select a highlight to view, the cut clip is pulled from Object Storage and played in a web browser.

Alongside the video, the AI highlight rankings from the CMS are displayed as concentric circles. The features depicted include crowd excitement, player gestures and match statistics, helping content producers and digital editors get their story out faster.

“If Roger Federer comes through his next game easily, you might want to do a package showing how balletic he is around the court,” says Seddon. “You could go in with this preconceived idea and compile a highlights package using clips suggested by the AI, at the same time as the AI is also building the highlights package of the match. This saving in production time allows Wimbledon to have first-mover advantage on both pieces. It’s this efficiency, the combination of man and machine, that the AI provides.”

“Wimbledon have to get it out first, so they have that first-mover advantage,” he adds. “If you don’t, or you are late, then other [broadcasters] will beat you to it, and being first is what gains traction on social: people share it, it goes viral. Wimbledon needs its channels to stand out.”

The deal with Wimbledon needs to be seen in the context of the All England Club’s decision to bring production of the Championships’ host broadcast in-house for the first time. A new division, Wimbledon Broadcast Services, led by former BBC sports executive Paul Davies, takes over from the long-running BBC-hosted production.

Tennis Australia made a similar move for the Australian Open in 2015, and the trend is reflected across the sports industry, with rights holders increasingly looking to take greater control over their own content.

In this context, it is important to note that the AI is very accurate at recognising active play: rather than just seeing two players wandering around court, it can tell whether they are actually playing tennis.

This may seem basic, but the ability to clip very tightly is of real benefit to Wimbledon’s rights management.

“Wimbledon sells broadcast rights to the BBC, ESPN and so on, but retains rights to show live tennis on some of its own channels, including Wimbledon.com and social media channels. They have a fixed volume of that footage they are able to use each day. If you think of the volume of clips they put out, then 10 seconds here or there adds up to the difference between whether content can be published or not. So, if I want to start a clip from the moment the ball is served rather than from Rafael Nadal’s routine prior to serving (which is still classed as live footage), then I’d better clip it tighter. That’s an advance we have achieved this year.”
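The arithmetic behind tight clipping is easy to sketch. In the hypothetical Python example below, each clip records when it starts, when the ball is served and when it ends; the function counts how many clips, published in order, fit into a fixed daily allowance of live footage, with and without trimming the pre-serve routine. The allowance and clip timings are invented for illustration; the article does not say how large Wimbledon’s allowance is.

```python
from typing import List, Tuple

# Each clip: (clip_start, serve_time, clip_end) in seconds of match footage.
Clip = Tuple[float, float, float]


def clip_length(clip: Clip, trim_to_serve: bool) -> float:
    """Seconds of live footage a clip consumes. Trimming starts the clip at
    the serve rather than at the player's pre-serve routine."""
    clip_start, serve_time, clip_end = clip
    return clip_end - (serve_time if trim_to_serve else clip_start)


def clips_that_fit(clips: List[Clip], allowance_sec: float, trim_to_serve: bool) -> int:
    """Count how many clips, published in order, fit a fixed footage allowance."""
    used, count = 0.0, 0
    for clip in clips:
        length = clip_length(clip, trim_to_serve)
        if used + length > allowance_sec:
            break  # the next clip would exceed the allowance
        used += length
        count += 1
    return count
```

With ten 30-second clips, each including 10 seconds of pre-serve routine, a 200-second allowance fits six untrimmed clips but all ten trimmed ones: `clips_that_fit([(0, 10, 30)] * 10, 200, trim_to_serve=False)` gives `6`, while `trim_to_serve=True` gives `10`.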


What does it cost?

IBM also has a deal with Fox Sports, which sees the US broadcaster deploying IBM’s AI tech across a number of sports properties, beginning with its coverage of this summer’s World Cup in Russia. Fox is currently offering its viewers the ability to compile custom highlights of past FIFA World Cup matches (from this tournament and from matches going back decades) for streaming and sharing on social media.

The computing giant is gaining a lot of mileage from promoting its activity around these high profile events and can justifiably be seen as a leader in the field.

IBM declined to disclose the cost of licensing Watson, and there is scepticism in the market that applying AI to most sports today is as cost-effective (or as accurate) as is made out. The principal cost lies in training the AI on sufficient relevant data, but, as the Wimbledon example makes clear, a team of editorial staff is still required to craft the AI-derived highlights into publishable packages.

In the assessment of ITV Sport’s Technical Director, Roger Pearce, AI is bound to be a force in multi-platform delivery of sport highlights.

“I would expect it to continue to be introduced via OTT platforms initially, where the sensitivity to errors is lower than on linear channels such as ITV, where mistakes are often out there on social media very quickly,” says Pearce. “It will become a big part of the armoury of a sports broadcaster but the decision point to invest in the technology will be driven by the usual process of weighing the cost of rights against revenue.”

“While it is tempting to assume that today’s AIs can create entire highlight reels for distribution on their own, the reality is that they still need the assistance of a trained team to work efficiently,” says Andreas Jacobi, CEO of Make.TV, which runs live video over Azure, AWS and Google Cloud for clients including Major League Baseball, Fox Sports and esports league ESL. “AI needs to assist the production team in complex and time-critical tasks without putting any of the operations at risk. By combining AI’s ability to streamline the content acquisition and curation process with trained staff and existing workflows, broadcasters can cut their production costs, scale their operations at will, and speed up content creation for multi-platform distribution.”

Likewise, Bevan Gibson, ITN’s CTO, believes that the current benefits of using AI to automate the creation of highlights are not worth the risk for tier-1 events.

“However, on lower-tier events, particularly those for which it isn’t currently commercially viable to create highlights, there is some benefit to be had by using AI and ML techniques to create this type of content at a significantly lower price point,” he says. “That is the case even if there is a risk that the quality may not be as high as would be expected on a premium event, as the alternative is to not provide this highlight at all.”

Alon Werber, CEO of Pixellot, a vendor of automated sports production systems, says: “Using AI for production and the creation of highlight reels requires a substantial initial investment, but it’s a powerful value-add to the broadcast experience. As in all innovation, there is a tuition fee to be paid and an initial investment in technology, time and trial and error, but that is part of the process. You can’t play in the big leagues without paying your dues, and we see it paying off as more clients are producing condensed games and sharing clips.”
