UK regulator Ofcom has published a discussion paper exploring the different tools and techniques that tech firms can use to help users identify deepfake AI-generated videos.

The paper assesses the merits of four ‘attribution measures’: watermarking, provenance metadata, AI labels and context annotations.


These four measures are designed to provide information about how AI-generated content has been created, and – in some cases – can indicate whether the content is accurate or misleading.

This comes as new Ofcom research reveals that 85% of adults support online platforms attaching AI labels to content, although only one in three (34%) have ever seen one. Deepfakes have been used for financial scams, to depict people in non-consensual sexual imagery and to spread disinformation about politicians.

The discussion paper is a follow-up to Ofcom’s first Deepfake Defences paper, published last July.

The paper includes eight key takeaways to guide industry, government and researchers:

- Evidence shows that attribution measures can help users engage with content more critically when they are deployed with care and properly tested.

- Users should not be left to identify deepfakes on their own, and platforms should avoid placing the full burden on individuals to detect misleading content.

- Striking the right balance between simplicity and detail is crucial when communicating information about AI to users.

- Attribution measures need to accommodate content that is neither wholly real nor entirely synthetic, communicating how AI has been used to create content and not just whether it has been used.

- Attribution measures can be susceptible to removal and manipulation. Ofcom’s technical tests show that watermarks can often be stripped from content following basic edits.

- Greater standardisation across individual attribution measures could boost the efficacy and take-up of these measures.

- The pace of change means it would be unwise to make sweeping claims about attribution measures.

- Attribution measures should be used in combination with other interventions, such as AI classifiers and reporting mechanisms, to tackle the widest range of deepfakes.

Ofcom said the research will also inform its policy development and supervision of regulated services under the Online Safety Act.

BFI to invest £11.85m in UK skills funding

The British Film Institute (BFI) has pledged £11.85m in funding over three years to support skills development and training across the UK.

Telxius selects Synamedia Quortex Switch

Telxius has integrated its carrier-grade content delivery network (CDN) with Synamedia’s Quortex Switch platform. The companies say the integration enables content providers, for the first time, to switch dynamically between CDNs in real time.

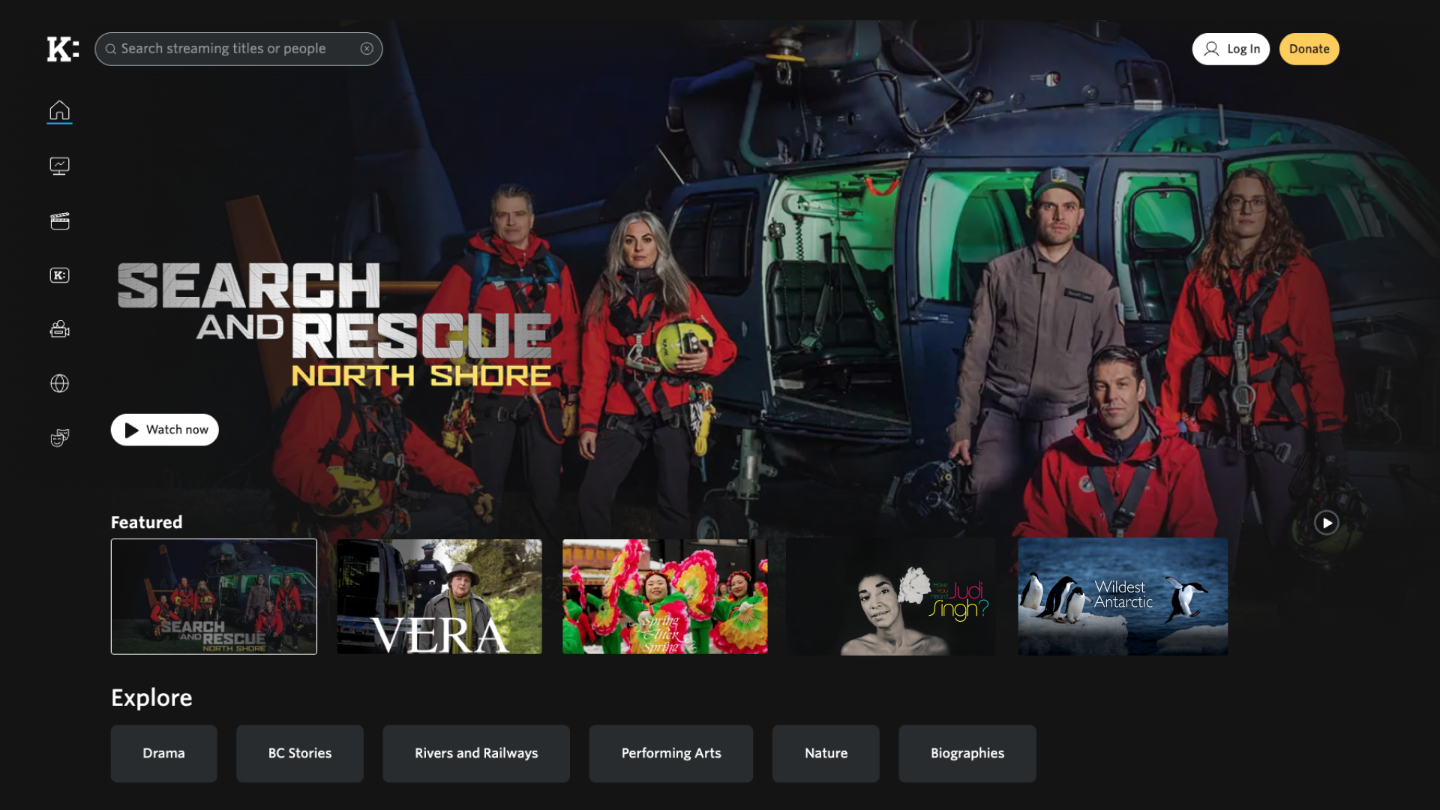

Knowledge Network selects ThinkAnalytics

Knowledge Network, British Columbia’s public educational broadcaster and streaming service, has gone live with ThinkMediaAI to give viewers a personalised TV experience underpinned by intelligent search and content recommendations.

Netflix launches first daily live show The Breakfast Club

Netflix will stream US morning radio show The Breakfast Club daily on its platform from 1 June 2026, marking the streamer’s first daily live programme.

ITV launches Live Addressable+ with Omnicom

ITV, the UK’s largest commercial broadcaster, has officially launched its live broadcast addressable advertising product, Live Addressable+, with an exclusive beta trial in partnership with Omnicom Media Group.
