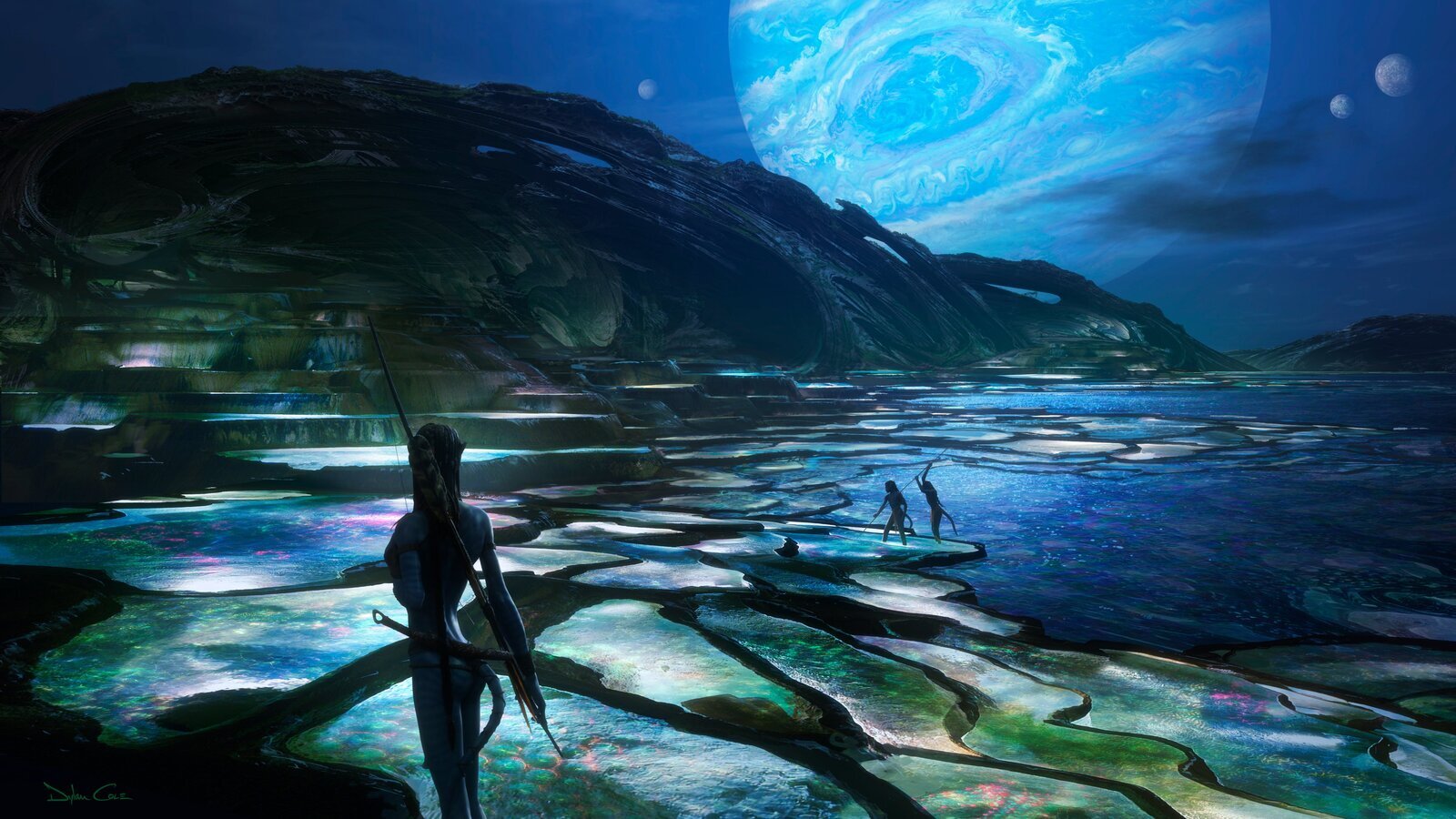

Avatar broke technical boundaries and commercial records in 2009 by bringing digital 3D into the light. Can history repeat itself with James Cameron’s four sequels currently in production?

Behind the scenes: Eurovision Song Contest 2026

What began as a technical experiment in 1956 is now a global cultural institution reaching approximately 170 million viewers on TV across three live shows and generating billions of views on digital platforms. IBC365 gets a tour backstage in Vienna.

Behind the scenes: Rooster

When Cinematographer Blake McClure signed on to shoot HBO’s new comedy Rooster, he wasn’t looking to reinvent the sitcom; he was looking for a way to stretch the visual language of comedy to integrate the intimacy of large format.

Building Bedlam: “Can I shoot an entire feature film on 65mm?”

Given the chance to shoot the 18th-century action drama Bedlam, Cinematographer James Butler crafted a daring project that merged medium-format photography with cutting-edge digital cinema on a customised Blackmagic URSA Cine 17K 65.

Behind the scenes: Undertone

While looking after his dying parents, VR Filmmaker Ian Tuason became obsessed with demonic possession stories, which planted the seed for the uniquely creepy sound design of his first feature.

Crafting suspense: “We wanted to raise the bar again”

Nella Mente di Narciso’s Director of Photography, Gianluca Braccieri, reveals the digital film cameras, custom lighting setup, and end-to-end post-production software the team leveraged to achieve the tension and mystery of the Italian crime documentary series’ highly anticipated third season.