IABM Lead Research Analyst Lorenzo Zanni takes an in-depth look at how increases in processing power and storage capabilities are broadening Artificial Intelligence applications throughout the broadcast, media and entertainment industry.

Once confined to science fiction, AI technology has benefited from the exponential increase in processing power and storage capabilities, as well as from the growing amount of information made available by the internet revolution, which has put vast amounts of "big data" within reach of machine learning (ML) algorithms. AI applications in the broadcast and media industry, which sprang up between 2016 and 2017, grew further in 2018.

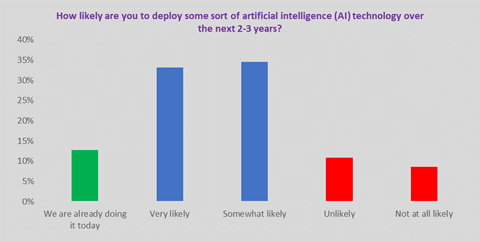

According to IABM data, AI adoption in broadcast and media is still at an early stage: only 13% of respondents to the latest Buying Trends Survey said that they had already deployed some form of AI technology. However, this is a sharp increase from the 2% reported in the previous survey.

Viewed through IABM's new industry model, the BaM Content Chain, Manage is the most popular area for likely AI deployment, at 34%. Produce (31%) and Support (26%) are in second and third place respectively. Store and Connect are at the bottom, with only 12% and 13% of end-users respectively likely to deploy AI technology.

Examining AI adoption in each part of the content chain shows how this emerging technology is helping broadcasters automate operations and gather insights into their audiences.

Create

At the beginning of the content chain, AI has the potential to automate parts of the creation process. Although these are creative workflows, they also involve routine tasks that could be automated, freeing up resources for more valuable creative work.

Some media companies have also shown great interest in using AI to automate camera operations. For example, in 2016, Disney announced that it was working on improving its automated camera operators to cover more basketball and soccer games.

In 2018, Nikon also started to provide automated camera solutions that use AI: MRMC, a company owned by Nikon, introduced its Polycam Chat solution at NAB Show 2018. At the same show, Mobile Viewpoint announced the launch of VPilot, an AI-powered live production solution.

These solutions can help broadcasters and content creators cover more events at a fraction of the cost. This is particularly appealing in sports production where demand for content is growing.

AI tools are already being used to drive programming decisions, that is, deciding which TV shows and movies to invest in, at companies such as Netflix. Netflix also uses AI to produce different trailers for the same TV show or movie to target different audience profiles.

Produce

As in Create, AI can also automate operations in Produce.

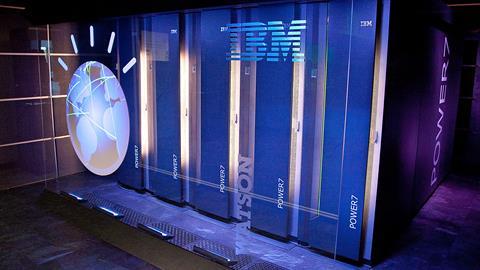

IBM has already used AI to produce highlights at the 2017 US Open tennis tournament. IBM Watson's cognitive algorithms were used to identify the key moments of each match: IBM researchers taught Watson to spot signals of noteworthy moments, such as players' celebrations and the level of crowd noise. Watson was also taught the various stages of a tennis match so that it could gauge the importance of a single point, such as a break point or match point.
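To make the idea of combining signals more concrete, here is a minimal Python sketch. It is not IBM's method: the signal names (`crowd_noise`, `celebration`), the weights and the context multipliers are all hypothetical, and it assumes that upstream audio and vision models have already scored each point on a 0 to 1 scale. It simply ranks points by a weighted excitement score, scaled up according to how important the point was in the match.

```python
from dataclasses import dataclass

# Hypothetical importance multipliers for match context (assumed values).
CONTEXT_WEIGHT = {"regular": 1.0, "break_point": 1.5, "set_point": 1.8, "match_point": 2.0}


@dataclass
class Point:
    point_id: str
    crowd_noise: float  # 0..1, assumed output of an audio model
    celebration: float  # 0..1, assumed output of a vision model
    context: str        # one of the CONTEXT_WEIGHT keys


def excitement(p: Point, w_noise: float = 0.6, w_celebration: float = 0.4) -> float:
    """Weighted sum of the two signals, scaled by the importance of the point."""
    base = w_noise * p.crowd_noise + w_celebration * p.celebration
    return base * CONTEXT_WEIGHT[p.context]


def pick_highlights(points: list[Point], k: int) -> list[str]:
    """Return the IDs of the k highest-scoring points, best first."""
    ranked = sorted(points, key=excitement, reverse=True)
    return [p.point_id for p in ranked[:k]]


points = [
    Point("p1", crowd_noise=0.3, celebration=0.2, context="regular"),
    Point("p2", crowd_noise=0.7, celebration=0.6, context="break_point"),
    Point("p3", crowd_noise=0.9, celebration=0.9, context="regular"),
    Point("p4", crowd_noise=0.6, celebration=0.8, context="match_point"),
]
print(pick_highlights(points, k=2))  # → ['p4', 'p2']
```

Note that the loudest point (`p3`) loses out to a quieter match point and break point once match context is taken into account, which mirrors why Watson was taught the stages of a tennis match. In practice such weights would more plausibly be learned from editors' past choices than set by hand.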

Adobe collaborated with Stanford University to develop a program that automates parts of the editing process through AI tools. This software, which focuses on dialogue-based video, matches script and footage using various video analysis techniques (e.g. image recognition and emotion recognition). Once this stage is complete, the editor can choose a particular editing style, for example using a specific camera angle whenever a certain character speaks in a shot. This speeds up the editing process by automating the video analysis while leaving the creative choices to the editor.

At the 2018 SMPTE Annual Technical Conference, Norman Hollyn, an editor and professor at the University of Southern California, focused on how AI can help producers better localise content during the editing process. Training the AI algorithms with country-specific data enables producers to automatically replace objects in scenes to suit local tastes. This is particularly relevant as the broadcast and media marketplace becomes more globalised.

Manage

Manage is the most natural area of application for AI technology. Tagging content is a highly manual and expensive process, which makes it an obvious candidate for automation with AI. By increasing the level of detail of the metadata, AI makes search in content management systems more precise, thus boosting monetisation opportunities.

The number of companies offering broadcasters and content owners AI tools to tag their content efficiently is already quite high. Leading providers in this area include GrayMeta and Veritone, as well as cloud service providers such as Google, Amazon and Microsoft. Microsoft won IABM's top innovation prize in 2018, the Peter Wayne Golden BaM Award, for its Video Indexer technology.

Some media technology suppliers offering asset management systems have partnered with these companies to provide their customers with AI tools. For example, Dalet partnered with Veritone and SDI Media partnered with GrayMeta, while Valossa's video recognition and intelligence platform was integrated with Avid MediaCentral at NAB Show 2018.

The data created and classified by AI algorithms can then be repurposed for monetisation workflows. At the 2017 Creative Storage Conference, GrayMeta claimed that implementing a high-quality metadata search solution within a media organisation can reduce search time by 54%.

Publish

As video delivery networks move to the cloud to take advantage of its greater elasticity and scalability, AI technology is increasingly being used to predict spikes in resource requirements and so reduce delivery delays. For example, around live sports events or the launch of a new TV series, AI is already learning to predict the resulting extra strain on a video delivery network, so that resources can be scaled up automatically across the whole video pipeline.
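A minimal sketch of forecast-driven scaling, assuming request rates are sampled at regular intervals. Holt's linear (double) exponential smoothing is used here as a deliberately simple stand-in for the AI forecasting models described above; the smoothing constants, the per-instance capacity, the headroom and the minimum fleet size are hypothetical parameters, not values from any real delivery network.

```python
import math


def holt_forecast(series: list[float], alpha: float = 0.5, beta: float = 0.3) -> float:
    """One-step-ahead forecast using Holt's linear (double) exponential smoothing."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    # Initialise the level with the first value and the trend with the first difference.
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        prev_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
    return level + trend


def instances_needed(forecast_rps: float, rps_per_instance: float,
                     headroom: float = 0.2, minimum: int = 2) -> int:
    """Capacity to provision: forecast plus safety headroom, never below a floor."""
    return max(minimum, math.ceil(forecast_rps * (1 + headroom) / rps_per_instance))


# Hypothetical requests per second ramping up ahead of a live match.
load = [1000, 1200, 1500, 1900, 2400]
forecast = holt_forecast(load)
print(instances_needed(forecast, rps_per_instance=500))  # → 7
```

Scaling on the forecast rather than on current load is what allows capacity to be in place before the spike arrives; a production system would likely also feed in known events such as the broadcast schedule.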

When it comes to video content preparation for delivery, AI technology already has several fields of application such as enhanced search and discovery, smarter filtering and compliance, increased accessibility and smarter ways to package and promote content. By making video searchable, media companies can improve content discovery, increase operational efficiency, deliver higher advertising revenues and eventually improve viewer engagement.

In 2017, for instance, Zone TV launched 14 AI-powered OTT channels known as Zone TV Dynamic Channels. These linear-style channels offer viewers multiple programme choices, enabling them to personalise their experience.

Another important area of application for AI is content protection. In 2016, NBC Universal revealed that it was using AI as an anti-piracy tool to track peak periods of illegal P2P downloading.

Monetise

In Monetise, AI can help optimise revenue-generating activities such as advertising.

In February 2016, Turner became the first broadcast and media company to apply IBM's Watson technology to ad sales, adopting Watson to develop cognitive technology for ad sales and to power its recommendation engine for advertisers. According to IBM, broadcast and media customers use Watson to examine social posts, online feedback and images, which is important in building audience profiles.

In 2017, French broadcaster M6 Group started to use emotional targeting, in which an AI engine serves viewers specific advertising depending on their emotions at a particular moment.

Channel 4 has also been investing in AI technology. The publicly owned UK broadcaster created an in-house Data Planning & Analytics team in 2011 and has since been developing machine learning techniques for customer segmentation and targeted advertising.

Consume

In Consume, AI can be used to drive personalisation.

In 2015, YouTube became an early adopter of AI-powered personalisation when it started using technology from Google Brain (Google's AI division) to power recommendations on its platforms. This moved the company to unsupervised learning, making it possible for the first time to find relationships between different viewing preferences that humans could not spot and label.

Pay-TV operators such as Sky, Telefonica and Comcast are investing in AI to improve relationships with their customers and reduce churn. For example, since 2017 Sky has been using AI for product recommendations and some other customer interactions. As part of this, it has collaborated with Thematic, an AI startup, to analyse customer feedback forms.

Connect

In Connect, AI can be used to optimise network performance and power other critical activities such as compression.

Netflix already uses AI and advanced analytics tools to optimise the performance of its CDN, Open Connect. More generally, AI tools can predict usage and automatically scale resources up or down in a cloud-based CDN environment such as Amazon CloudFront, which helps minimise delay and budget more accurately for costs.

Netflix, which has consistently been at the forefront of AI deployment, has developed a method called Dynamic Optimizer to improve streaming quality in regions with limited internet bandwidth. This compression method uses AI to analyse the characteristics of the images and optimise video quality within given constraints, such as a target bitrate.

In 2018, many encoding vendors incorporated machine learning functionality into their codecs. Codecs powered by machine learning can use information such as historical data, human reaction data and video characteristics to optimise encoding, i.e. to deliver the same or higher quality at a lower bitrate.

Store

In Store, AI can act as an optimiser of storage infrastructure.

This application has been seen in other industries facing escalating demand for storage capacity, but, surprisingly, less so in broadcast and media. In other verticals, AI has been incorporated into storage management solutions to predict demand for storage resources and plan accordingly. This is a likely next step for current storage solutions as media companies increasingly manage their content supply chains as if they were factories.

Critically, however, AI can also act as an additional driver of demand for storage capacity. This is because AI algorithms can produce significant (and actionable) results only if they are fed large datasets. This places an additional burden on storage systems, one that often can be met only through cloud provisioning of resources. It is another example of how AI deployments often go hand in hand with cloud-based resource provisioning.

Support

In Support, AI has the potential to augment current solutions by automating expensive tasks.

In some cases, these tasks are so expensive and time-consuming that broadcast and media organisations do not carry them out at all. In this respect, AI can enable media technology users to unlock previously untapped benefits.

In quality control, vendors such as Interra Systems and Mediaproxy incorporated AI functionality into their offerings in 2018 to enable automated content tagging for compliance classification and exception-based monitoring. In network monitoring, Skyline launched DataMiner in 2018; the product uses AI to augment the management of broadcast infrastructure.

The role of AI in cybersecurity is similar to its role in monitoring. AI algorithms can be fed a variety of operational information, such as user behaviour and network data, to automatically detect anomalies and alert human operators. AI can therefore serve as an efficient threat detection tool that augments cybersecurity capabilities. Because this kind of continuous analysis would be impractical for humans to carry out, it gives broadcast and media organisations an additional tool against cyber-attacks.
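As a minimal illustration of anomaly detection on operational data, the sketch below flags readings that deviate sharply from a rolling baseline. It is a simple statistical rolling z-score test rather than a machine learning model, and the metric (failed logins per minute), the window size and the threshold are hypothetical choices made for the example.

```python
from statistics import mean, stdev


def detect_anomalies(values: list[float], window: int = 10, threshold: float = 3.0) -> list[int]:
    """Return indices whose value deviates from the preceding `window` values
    by more than `threshold` standard deviations (a rolling z-score test)."""
    alerts = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change at all as anomalous.
            if values[i] != mu:
                alerts.append(i)
        elif abs(values[i] - mu) / sigma > threshold:
            alerts.append(i)
    return alerts


# Hypothetical failed logins per minute, with a burst at minute 10.
failed_logins = [3, 4, 2, 5, 3, 4, 3, 2, 4, 3, 40, 3, 4]
print(detect_anomalies(failed_logins))  # → [10]
```

The returned indices are what would be passed on to a human operator. One limitation is that once a spike enters the baseline window it inflates the standard deviation and can mask anomalies that follow closely behind it; learned models or robust statistics such as the median could be used to reduce this effect.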

IABM Media Tech Trends Reports

IABM members can access the Media Tech Trends Reports on AI, Cloud, Blockchain and Immersive Experiences on the IABM website. These provide a detailed analysis of adoption and deployment of these emerging technologies in the broadcast and media sector’s content chain.
