Pitches for the shortlisted projects on the IBC Accelerators Innovation Programme were presented in a quick-fire round in front of an international M&E audience at the Kickstart Day on 6 March.

The programme, which offers a year-round cycle of engagement, invited the Champions and Participants of each of the 12 projects to present the common challenges they are addressing, what they hope to solve, and how their innovations will help the wider industry now and in the future. Each pitcher also had the opportunity to share who is already involved, who they want to collaborate with, and which specific vendors or sectors they want to reach.

Commenting on the importance of the IBC Accelerators programme, sponsor John Canning, Director of Developer Relations - Creators at AMD, described one of its key strengths as “doing things that live beyond the tradeshow.” AMD is returning as a sponsor this year and will help support a whole load of new workflows as the systems evolve...

Spatial computing: “Instead of showing people a story, you’re letting them inhabit it”

Leveraging generative AI, computer vision, and data from real environments, spatial computing has opened the door for cutting-edge systems that blend the physical and digital worlds into a new frontier of human-technology interaction.

NAB preview: Automation, reinvention and politics to steal the show

NAB 2026 looks set to bring a raft of creativity and technological innovation, yet serious political and environmental questions remain.

How vertical video became the new frontline for live sports

Live sports entertainment remains the most powerful driver of real-time engagement in media, but the format through which it’s delivered is rapidly evolving.

From green screen to Unreal worlds: The tech stack driving virtual production

As broadcasters and content creators embrace in-camera VFX and data-driven workflows, a new technology stack is redefining what can be achieved on set and who can afford to achieve it. Framestore’s Connor Ling explores the possibilities of this evolving ecosystem.

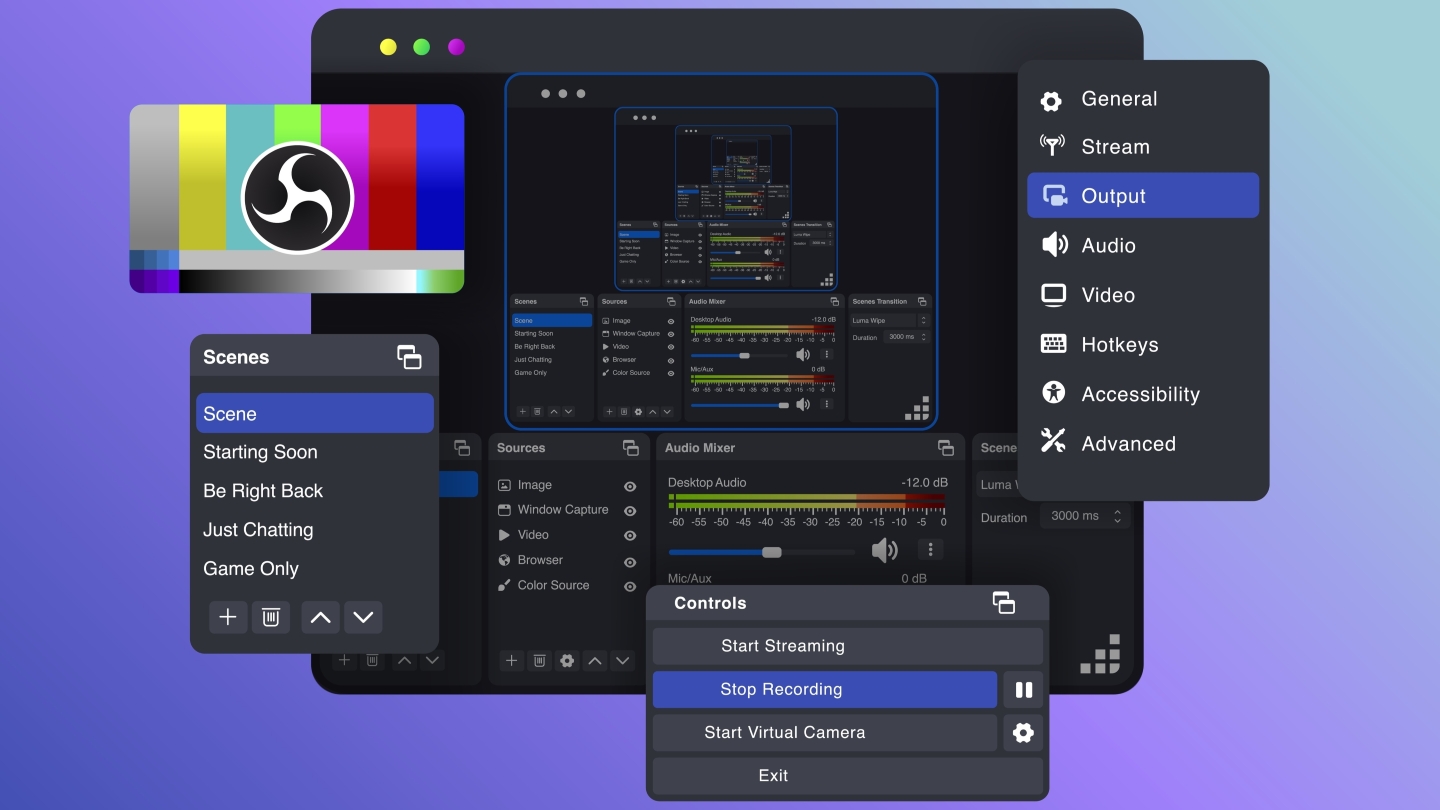

Software studios: How inevitable is fully software-defined production?

With the rise of free, high-quality media tools, physical broadcast production hardware is looking less and less essential. IBC365 investigates.
